AI CYBER ARSENAL UNLEASHED: HOW HACKERS WEAPONIZE ARTIFICIAL INTELLIGENCE FOR STEALTH ATTACKS

The cyber battlefield has undergone a quiet but seismic shift. Forget the crude ransomware notes and clumsy phishing attempts of yesteryear. A new generation of AI-enabled cyber attacks is here, leveraging machine learning to run hyper-personalized, highly effective campaigns that traditional security tools often miss. This isn't a future threat; it's the present danger, rewriting the rules of cybersecurity in real time.

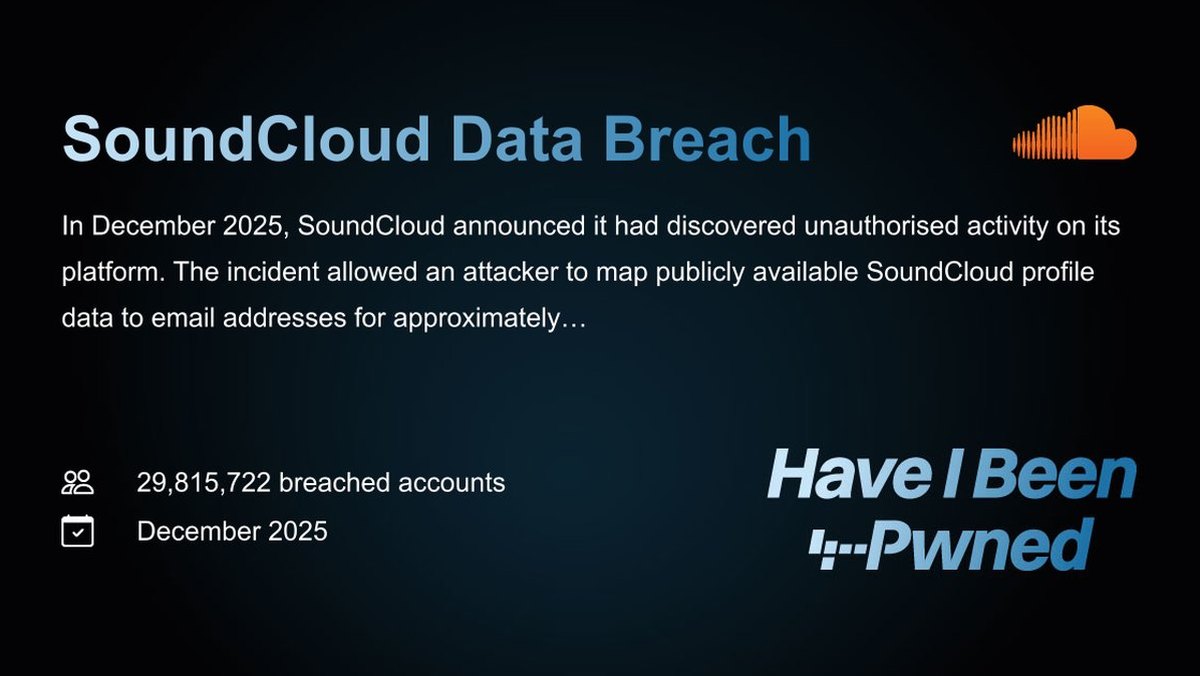

At the core of this shift is AI's ability to mimic legitimacy. Cybercriminals now use AI to generate phishing emails personalized enough to slip past many standard filters, drawing on public data to impersonate executives and reference real company events. This psychological manipulation, which often involves no malware at all, can lead directly to credential theft and serious data breaches. AI is also supercharging malware development: it enables the automated creation of a practically unlimited stream of variants that evade signature-based detection, so any unknown file may be a threat that no signature yet covers.

The most insidious threat is in identity security. AI-enhanced credential abuse can closely mimic human login patterns, pacing attempts to stay under lockout thresholds and specifically targeting privileged accounts. Because these attacks use stolen but valid credentials, they blend into normal network traffic and slip past perimeter defenses, which are built to stop intruders rather than authenticated users. "We are moving from detecting malicious code to detecting malicious intent," explains a senior threat analyst at a leading security firm. "The old rulebooks are being burned by AI."
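One way defenders can look for attempts that stay under lockout thresholds is to count failures per source across many accounts, rather than per account. The following is a minimal sketch, not a production detector: the event shape `(timestamp, source_ip, account, success)`, the function name `flag_spray_sources`, and the threshold values are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def flag_spray_sources(events, window=timedelta(hours=1),
                       max_accounts=5, lockout_threshold=3):
    """Return source IPs that fail against many distinct accounts within
    `window` while staying below the per-account lockout threshold.

    `events` is an iterable of (timestamp, source_ip, account, success)
    tuples; this shape is hypothetical, not a real log format.
    """
    failures_by_source = defaultdict(list)
    for ts, ip, account, success in events:
        if not success:
            failures_by_source[ip].append((ts, account))

    flagged = set()
    for ip, failures in failures_by_source.items():
        failures.sort()  # order by timestamp
        start = 0
        for end in range(len(failures)):
            # Shrink the window from the left until it spans at most `window`.
            while failures[end][0] - failures[start][0] > window:
                start += 1
            per_account = defaultdict(int)
            for _, account in failures[start:end + 1]:
                per_account[account] += 1
            many_accounts = len(per_account) >= max_accounts
            under_lockout = max(per_account.values()) < lockout_threshold
            if many_accounts and under_lockout:
                flagged.add(ip)
                break
    return flagged


# Illustrative data: one source tries six accounts once each,
# another fails twice against a single account.
base = datetime(2024, 1, 1, 9, 0)
events = [(base + timedelta(minutes=5 * i), "203.0.113.7", f"user{i}", False)
          for i in range(6)]
events += [
    (base, "198.51.100.2", "alice", False),
    (base + timedelta(minutes=1), "198.51.100.2", "alice", False),
]
print(flag_spray_sources(events))
```

A per-account lockout counter never fires on the first source, because no single account sees more than one failure. Grouping by source makes the pattern visible, although an attacker who rotates source addresses would still require correlation on other signals.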

Every organization is now a target. The scale and precision of these attacks mean that no one is too small or too obscure. A single AI-crafted phishing message can give attackers the foothold they need to compromise an entire network, leading to ransomware deployment or a stealthy, long-term data breach. The financial and reputational stakes have never been higher.

We predict a brutal consolidation in the security industry within 18 months. Companies relying solely on legacy, rule-based models will fall victim to these adaptive attacks. The only viable defense will be a new paradigm of dynamic, identity-centric behavioral analytics that can spot the subtle inconsistencies of an AI impostor in real time. That concept must also extend to securing the foundations of decentralized finance through advanced blockchain security.
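To make identity-centric behavioral analytics concrete, here is a toy sketch of scoring a login against a user's own history. The `Baseline` fields, the point weights, and the two-standard-deviation cutoff are illustrative assumptions, not values from any real product, and the hour comparison uses a plain mean, which is a simplification for users whose logins cluster around midnight.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Baseline:
    """Hypothetical per-user profile built from past legitimate logins."""
    login_hours: list      # hour of day (0-23) for each past login
    known_devices: set     # device identifiers seen before
    known_countries: set   # countries seen before


def risk_score(baseline, hour, device, country):
    """Return an integer risk score from 0 to 100 for one login attempt."""
    score = 0

    # Time of day: flag logins far from the user's usual hours.
    mu = mean(baseline.login_hours)
    sigma = pstdev(baseline.login_hours) or 1.0  # avoid dividing by zero
    diff = abs(hour - mu)
    diff = min(diff, 24 - diff)  # wrap around midnight
    if diff / sigma > 2:
        score += 40

    # Valid credentials arriving from an unfamiliar device or country.
    if device not in baseline.known_devices:
        score += 30
    if country not in baseline.known_countries:
        score += 30

    return score


alice = Baseline(
    login_hours=[9, 9, 10, 8, 9, 10],
    known_devices={"laptop-01"},
    known_countries={"US"},
)
print(risk_score(alice, hour=9, device="laptop-01", country="US"))
print(risk_score(alice, hour=3, device="unknown-device", country="US"))
```

The point of the sketch is that the password never enters the calculation: a login with valid credentials can still score high because it deviates from the account's own history, and a real system would likely combine many more signals.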

The age of automated, intelligent offense has begun. The question is, can defense evolve fast enough?